Notes on AI

A snapshot in time

Let’s pause for a second right here

I don’t think we as a society are collectively talking about AI enough, and it’s time someone made the brave choice of giving this niche topic the attention it deserves.

Hah.

Because trends in tech move so quickly - remember NFTs? - and because trends within AI seem to move even quicker, I thought it would be a good idea to note down all my thoughts on AI right now, as a snapshot of what I think and feel about it in this particular moment in time. It might be fun to see how these notes read even a year into the future, when the bubble is sure to have burst, or at least the tech landscape is sure to be substantially different.

Another reason I felt like noting this stuff down is that there’s so much variance among folks in their opinions and uses of AI, depending on who you ask, and I want to capture where I stand at this moment. Because it seems to me that the tech landscape currently exists in a world of extremes. There are people who don’t use AI at all while others have already integrated it deeply into their digital setup. There are people up to speed on the latest behind-the-scenes drama at OpenAI down to the hour, while others wouldn’t even notice if ChatGPT was nuked tomorrow. There are VCs pumping millions into every startup that mentions “agentic workflows” on its website while big tech is simultaneously laying off thousands of employees. There are people who think AGI will save us while others are sure it’s going to bring about the literal destruction of humanity. There are people who believe we’ve created a new consciousness while others are frustrated they can’t get their LLMs to write decent code. Some are sick of hearing the term AI itself while others can’t get enough of it.

“It was the best of times, it was the worst of times, it was the age of wisdom, it was the age of foolishness, it was the epoch of belief, it was the epoch of incredulity, it was the season of light, it was the season of darkness, it was the spring of hope, it was the winter of despair…”

- Charles Dickens, inadvertently describing 2025

Maybe the only thing tying us all together in this giant cacophony of technological and social change is that each one of us has their own takes on it. Here are mine.

I’m sure a lot, if not most, of my opinions below will look embarrassing in the future, if they don’t already, but that’s kind of the point.

My takes on everything AI adjacent as of November 2025, not that anybody asked

Am I an expert on AI?

Oh hell naw. While I have done some basic machine learning projects in my past academic life I’ve since forgotten everything I learned by doing them. (Classic me!) I couldn’t tell you the first thing about how an LLM works (me at a party: “so, uhh, it’s a neural network trained on a corpus of text, and it basically predicts, like, the next token, y’know…”), have barely a clue what terms like “alignment” and “scaling” mean beyond their literal definition, and at the moment am perfectly fine using LLMs without really understanding them very deeply, or at all.

—

How up to date am I about what goes on in the AI world?

Quite enough, I think. I do end up hearing about most major product launches, new models that claim to have beaten all the benchmarks, deals, acquisitions, and even AI-adjacent Tweets and memes and interview clips that go viral, simply through Twitter and the blogosphere. That said, I don’t follow any of the actual research these labs are doing, nor do I read any papers, and nor am I personally in touch with anyone in this space the way a lot of folks online seem to be.

—

Do I find AI to be a genuine positive for society? Is it actually a useful technology or just all empty hype?

It’s absolutely useful and I can’t believe there are people who think otherwise. An AI chatbot is literally an omniscient machine trained on all of humanity’s written knowledge that will endlessly, patiently answer any questions you have about almost any topic or situation: legal, medical, technological, personal, and the like, all the while keeping your previous questions in its context window so that the conversation naturally flows. It’s the kind of stuff you’d dream about in a science fiction novel. It’s the distribution of intelligence itself, and arguably the most democratizing technology to have become mainstream since the internet itself. I can’t imagine going back to an AI-less world. I think artificial intelligence as a term has gained a very particular flavor of negative connotation among certain kinds of people - creatives and artists, especially - who look down on anyone using it, and I do get where that sentiment comes from, but the technology’s overall usefulness vastly outweighs many of the concerns I have about it.

—

What’s so vital about it? What do I actually use it for in my day-to-day life?

I only use chatbots like ChatGPT. I don’t use any AI-based image or video generation tools, agents, workflows, code editors, or APIs. (I’m ancient that way.) I mostly use AI to ask it questions regarding whatever I happen to be curious about that day, new things I’m trying to learn, stuff I need explained, problems I need solved, projects I’m trying to do, programming, stuff I need help remembering, niche topics I want to have a conversation with an expert about, legal/medical documents I need analysed because I don’t understand legal/medical jargon, and so on. Basically, if there’s anything a friend or a quick internet search cannot help me with, I will be asking an AI.

That said, trying to answer “how do I use AI” is a bit like trying to answer “how do I use Google search”, just because I use it so much, you know. So here’s a snapshot of some of my past conversations with ChatGPT - my “AI search history”, so to speak, with all the truly embarrassing queries removed - which hopefully gives you a better idea:

—

So the hype is all real? There’s no AI “bubble”?

I’m not a hundred percent sure on that, honestly. The money being poured into certain AI-related investments and deals is so huge it makes my head spin. (OpenAI and Oracle signed a deal worth $300B earlier this month, and no, that is not a typo.) So while I am bullish on AI just because it’s been a great tool for me personally, I just don’t know enough to speculate whether it will genuinely create as much economic value as these companies hope/assume it will. I wouldn’t be surprised either way.

—

Do I think AGI is here?

I honestly don’t know what the term “AGI” even means anymore. I’ve heard it used in so many different contexts over the years that it’s now lost any coherent meaning to me. I will say that if you went back in time and showed ChatGPT to someone from even, say, 2015, they’d probably call it AGI, no?

—

So if AI is that good, do I think it poses an existential risk to humanity?

I lean towards no simply because it feels too far-fetched, too sci-fi-esque to say that “AI is going to kill us all”, but that’s honestly not a good enough reason to have the opinion I have, because we live in an inherently far-fetched world now. (The movie Her, which features a sentient and conversational AI, supposedly takes place in the distant future, and took only ten years to become a reality.) However, AI risk is thankfully a very well known and highly discussed topic in these circles, and I assume that the companies building these models have priced and factored this risk into their engineering. Or so I hope.

—

Since I’m a software engineer by profession, do I vibe code?

Nah. Last I checked, AI just wasn’t good enough for that yet. I mean, I do use AI tools when engineering sometimes, don’t get me wrong, and they can be so incredibly helpful, but the thought of letting an LLM directly edit and write to files in my local repository fills me with anxiety. That’s because the one time I gave vibe coding a genuine shot a few months back didn’t go so well.

What happened was that I found an open source web-based tool that I wanted to contribute a simple change to. So I installed Claude Code and started hacking away to see if it could help me make the change I needed quickly. And yeah, Claude Code is very slick, and works quite smoothly, and while it did solve the core problem at first, it would always miss some improvement or optimization that I needed done subsequently for me to consider the fix complete. And so we got into this loop of a) me asking it to modify or fix something it missed, b) either it replying with, “You’re absolutely right!” without actually fixing anything, or it fixing that bug but creating another one as a side effect, c) me pointing out that its changes hadn’t worked, and us going back to step a). After about two hours of us going through this cycle together without reaching a fully working solution, I ended the afternoon by calling it a bunch of slurs and slamming down my laptop in frustration. My conclusion was that if the best model at the time couldn’t help me make a minimal change in a project written in vanilla JavaScript, vibe coding really wasn’t going to help me with anything even slightly more complex. I’m sure the newer models are far better at this now but I haven’t been curious enough to try.

—

Are LLMs actually conscious, like many people seem to think? Do they actually “understand” what they’re saying?

LMAO. Just put the next token in the bag, bro.

—

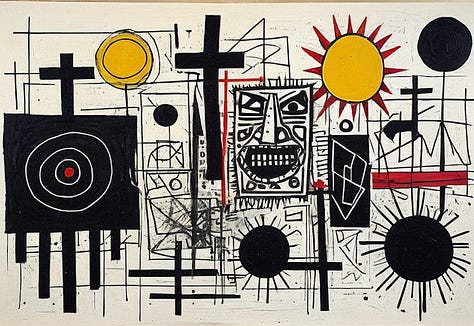

Do I think AI is capable of making real art?

On a long enough time horizon, yes, I think so.

This is a hot take because we like to consider the ability to make art of any kind as something distinctly human, but looking at the rate of progress in AI, especially among the image and video generation models, it just seems inevitable that we soon won’t be the only species doing genuinely creative things. (We’ve been here before: we used to be the only species that played chess, but since about thirty years ago we’ve stopped being even the best at it.) And trust me, I don’t say this with glee at all. But it was this ACX blog post, where thousands of readers were asked to guess whether a bunch of paintings were made by humans or AI, that really changed my mind. I did very badly at the test myself and encourage you to try it as well. I’m not saying that any of the AI-generated paintings I’ve shown above (which are from that very post) are Real Art, per se, but that they resembled human-made art closely enough that it’s no longer outside of my Overton window to think that an AI model could someday make a great painting or even a great song. (Great movies or novels might be quite a bit further away. God, I hope…) I think that when people say that “AI can never make real art”, they are only thinking about its current capabilities and are at least a tad driven by insecurity. And honestly, I get that. The fact that AI could someday be as creative as humans doesn’t really fill me with joy, but then again, I would’ve said the same for chess had I been able to talk when Deep Blue bested Kasparov, and I would’ve been wrong.

Now, the question of who made an AI-generated work of art is another one entirely. What percentage of the credit should go to the prompt engineer, to the underlying AI model (and thus, by extension, to the researchers and programmers who built it), and to the millions of works of art the AI likely scraped to make its vision come true? This is a question that deserves its own post, though here’s a thoughtful essay that should serve as a good starting point to think about this thorny issue.

—

Is AI going to take away all of our jobs?

I don’t know about the short term, and definitely not “all” of our jobs, but yes, it will make a bunch of jobs obsolete, which in fact seems to have happened in certain professions already. On a long enough time horizon, however, I think it’s just the next wave of automation that will grow both the size of the economy and the number of things people are enabled to do to a large enough extent that it will end up creating far more jobs than it kills, just like every automation technology did before it.

—

Am I concerned about the water usage and environmental impact of AI, as a lot of people seem to be?

No.

—

Okay, if not environmental, do I have any other concerns about it?

Oh, so many. There are thousands of people out there who are deeply attached to their AIs to a worrying extent, which is sure to affect society and culture in ways we can’t even predict right now. The internet is getting actively worse because of the deluge of AI slop content that’s pervading every social media platform these days. Deepfake as a technology also scares me because I genuinely can’t think of a single positive use case for it. The growing prevalence of AI-enabled scam phone calls and bot replies is also gross, and is making our digital environments increasingly lower-trust and not as fun to inhabit.

—

Do I think the positives of AI outweigh all these concerns?

Yeah, it’s a technology, and any technology is going to have positive and negative use cases. These are all genuine problems and I don’t know the fixes for them - if I did, I probably would have more money than I do right now - but I don’t think it makes the technology itself necessarily evil. Maybe this sounds banal but I still think it’s true.

—

And what do I think of the myriad of different AI products out there?

That’s what the next section is about.

My thoughts on every popular AI product as of November 2025, sorted from least to most used by me

Windsurf, Lovable, Llama, Cursor, Deepseek, NotebookLM

These are six entirely different products made by separate companies, but I grouped them together because they all have something in common: while I have heard of each of these, I’ve never used them more than a couple of times, if at all, and so they’re mostly just product names in the AI space I’ve vaguely heard of.

I first started a draft of this post in early 2025 and most of these products were in the zeitgeist back then, hence their addition here, but at this point I barely recognize some of their names. Still, thoughts on each below.

Windsurf

This is an AI code editor I’ve only heard about because, earlier this year, OpenAI was set to acquire this company for like $3B, but then Google snatched them up, and this became a huge story because a lot of Windsurf employees apparently got screwed over in that deal.

Lovable

This is also an AI IDE whose name rings a bell, but I’ve never used it, nor heard anyone talk about it online or IRL in a long, long time, i.e. a few months.

Llama

All I know is that this is Meta’s large language model that developers seem to use mostly through the API and not a user facing client. It is integrated into all their products, though, even WhatsApp, but I’ve never used it, and find forced AI integration into consumer apps generally annoying with very few exceptions, this not being one of them.

Cursor

This is an IDE that lets you use any underlying AI model you want to help you vibe code. Of all the AI code editors, I’ve always had the vague sense that this is by far the most popular one, without knowing any actual usage numbers.

It being “just a VS Code fork” is kind of a meme at this point.

I did download it once, but because of my distaste for vibe coding - see the previous section - I never did anything significant with it, and so I can’t speak to its quality.

Deepseek

Deepseek was a state-of-the-art model created by a Chinese company, and was in fact the first major AI release from a country that wasn’t the US. This caused all the podcastbros and Twitter anons to collectively lose their shit, because it was the first time that people seemed to really consider the possibility that the US could potentially lose the AI race. As of right now, though, that seems impossible.

I remember that asking Deepseek about Tiananmen Square became a huge meme around the time of its release, because that was the one topic it would decline to answer any questions about. People considered this a gotcha about Chinese censorship while forgetting that all the American AI models we use are heavily censored - “lobotomized”, they call it - as well.

I did try it out once and didn’t have any strong opinions on it.

NotebookLM

NotebookLM is a chat based tool by Google that uses Google’s Gemini model under the hood. (More on Gemini later.) The unique thing about NotebookLM is that it’s meant to be used as a research assistant specifically, and allows you to upload more files, PDFs, and web links to it for context than any other tool out there.

I did check it out once, and I can imagine this being very useful to researchers and academics who need to go deep into their niche, but the extent of my depth of research when using AI is asking it questions like “what’s the difference between coriander and parsley”, and that’s how I know that something like NotebookLM is not meant for me.

— — —

DALL·E, Stable Diffusion, Midjourney, Sora

These are, again, a few different AI models that have one thing in common: they were created specifically for image and video generation. I have toyed around with some of these, but not too deeply, and don’t really have anything interesting to say about any of them individually. I just have the vague sense that Midjourney has always been the most popular one of these, though the fact that it’s usable only through Discord makes me never want to use it myself.

That’s not to downplay how unbelievable these models are, though. Image creation to me is when AI feels least like an engineered technology and most like literal magic. What do you mean that I can type in any detailed description as a prompt, and have a computer perform matrix multiplications to create a JPEG image for me - and now, even video (!) - based on whatever I just wrote? It feels far more surreal than text-based token completion.

Because of this I always check out any new image generation product like I’m a five year old looking at a new toy, but just like a five year old I also somehow get bored of it in a few minutes and never use it again. It’s incredible how fast our minds adapt to new realities, how quickly something that once seemed magical becomes mundane.

In spite of my initial fascination, I think I eventually get bored of these tools mainly because I struggle to think of any real use cases for image or video generation in my day-to-day life. The one time I did use this technology was earlier this year, when I wanted to buy some new bedsheets and a duvet. I couldn’t quite picture which colors would look good on my bed, and so I uploaded a picture of my bed to ChatGPT (which has DALL·E inbuilt) and asked it to show me what it would look like in various colors, and it was actually pretty helpful. But that was like six months ago and I’ve never needed to have an image created by AI since then.

— — —

Nano Banana Pro

So Nano Banana Pro is also an image generation model similar to the ones mentioned above. It’s from Google, and uses their latest Gemini 3 model underneath, both of which came out literally this week. I normally would’ve ignored this release, as I was already in the middle of writing this post, but I was so astonished by Nano Banana Pro’s results that not only did I have to add it here but I had to give it its own section.

As you can see above, the images this model can create are so photorealistic, so indistinguishable from a picture I’d normally take from a camera or my iPhone, that they have surpassed my ability to tell AI-images from actual photographs. Those SOBs did it. They actually crossed the uncanny valley.

While I have said that I’m very bullish about AI in general, this particular aspect of it, along with deepfakes, makes me extremely squeamish, and I don’t know what it’s going to do to us as a collective. I think most people tend to be narrow minded when thinking about paradigm-shifting technologies - there are people out there still opposed to self-driving cars, for instance - but I have to confess that with regard to realistic image generation, I myself am at a complete loss as to how it’s supposed to benefit society. Because I can think of a bunch of far reaching implications once this technology becomes more widespread, but they’re all quite dystopian:

Very soon, you will have no way of knowing whether any photo you see on social media, on the news, on a billboard, or anywhere else, is AI-generated or not.

Photographs will not be trustworthy evidence anymore, whether socially, culturally, or legally. Profile pictures will mean nothing.

Spams and scams will increase a hundredfold. Identity theft, impersonating someone, phishing, and things of the like will be much easier to do and harder to spot.

You get the idea. Of all the products I’ve mentioned in this post, Nano Banana Pro is both the newest one and the only one that makes me genuinely uncomfortable. And it’s just the first one to be this good; I’m sure OpenAI and the other companies will catch up to its quality very soon. I don’t know how the engineers and researchers at Google feel about this, how they reckon with what they’re making, and how much they’ve thought through its implications. I hope I’m wrong, and that they see productive and ethical uses of this technology that I don’t yet. Let’s move on and discuss other products before I lose my mind any further.

— — —

Perplexity

The unique thing about Perplexity was that it was an internet search engine that didn’t give you search results, but rather a summarized answer using AI. I thought it was pretty cool. I remember playing around with it a bunch when it was hot, asking it things like, “What’s the current status of the Russia Ukraine war?”, and getting a pretty concise, informative answer in response, updated to that very day. Aravind Srinivas as a founder also made some online waves during that time.

That said, at this point, every major AI product seems to have figured out how to search the web and summarize what it found for you. ChatGPT does this without even being explicitly asked to; it figures out whether it needs to look something up based solely on what you say to it, as if you were talking to a friend. And as you might have noticed, most Google searches give you an AI-generated summary at the top, whether you asked for one or not.

And so Perplexity’s moat has disappeared. I don’t know how this company is going to stay relevant. I myself haven’t needed to use the product in quite a while.

— — —

Copilot

Copilot is Microsoft’s AI assistant, which uses GPT underneath as part of their deal with OpenAI, so it’s not really a unique model. Still, it’s clear that Microsoft intends to go all-in on AI and has integrated Copilot into literally every single product they have: Windows, Edge, Bing, and all their Office products.

I find this integration mildly annoying, but there are two use cases for it that I find very interesting.

The first is that if your employer uses the Microsoft 365 suite (Outlook, Teams, SharePoint, Word, Excel, PowerPoint, the whole nine yards), Copilot knows everything you’ve ever written in these products. It can thus act as a personal assistant who can integrate information you entered in different documents, presentations, and emails across the suite, and who thus has all the context to help you with any abstract queries you might have. I don’t personally use this, but can imagine it being a game changer for a lot of people whose job is mostly emailing and writing. So you could ask it things like, “go through all my emails and Teams messages and tell me what my urgent tasks are for this week”, or “summarize every interaction I’ve ever had with a [company] over the last five years”, and so on.

The second use case is more relevant to me. Because Copilot is integrated into GitHub as well, you can install the Copilot extension on VS Code (also a Microsoft product), authenticate yourself with GitHub, and basically use it as an assistant programmer that helpfully autocompletes a lot of code for you.

Getting inline code suggestions like this is very different from the vibe coding phenomenon I ranted about in the previous section, where you give an IDE instructions in English and it just spits out entire files of code for you. Here, you’re still doing all of the thinking and writing 90% of the code, with the AI just finishing your sentences for you and taking care of some of the manual grunt work.

But it makes so much of a difference because the quality of Copilot’s (rather, GPT’s) autocomplete is exceptional. It’s not too ambitious, doesn’t suggest anything too wild, but is rather able to extrapolate the next variable name, conditional statement, inline comment, or helper function you’re about to type, based solely on what you’ve typed in so far and on all the other code in the file you’re editing, with shockingly high accuracy.

Once you use this enough, typing all one hundred percent of your code “by hand” starts to feel archaic. (Ask me how I know - I’m currently out of GitHub Copilot free credits and am in great agony.) This has quickly become one of my absolute favourite uses of AI across all domains.

— — —

Grok

Grok is an AI model created by researchers at Elon Musk’s xAI. While I have tinkered with its chat interface a couple of times, I would’ve otherwise never cared about Grok except for the fact that it’s integrated into Twitter exceptionally well. It’s one of the only two features of post-Elon Twitter, the other being Community Notes, that I really love. (It will always be “Twitter” and never “X” to me, though, sorry.)

Basically, every Tweet has a button for Grok, which if you click it, results in Grok elaborating on and explaining that Tweet to you. You can even tag it in any Tweet you write - a lot of replies on that site are basically, “@grok is this true?”, as a form of fact-checking - and the bot will reply to your question with a researched answer.

Now, Twitter has certain posts that come up once in a while that would make absolutely no sense to most users on their own. That’s because these Tweets are either using a super-niche meme, or they’re implicitly referencing (“subtweeting”) another post that everyone on the TL is currently talking about, or they’re using shibboleths that would only be legible to users on a certain part of the website (eg. football Twitter, film Twitter, and so on). What’s utterly mind-blowing to me is how good Grok is at explaining any Tweet, even the niche kinds mentioned above, whose meanings would not be easy to extrapolate from just text or image analysis. It’s somehow able to factor in the current discourse on the site, the tone a Tweet was written in, and a bunch of other data in its context window when analysing any Tweet, and is thus able to explain almost anything with deadly accuracy and thoroughness. It even spots irony and sarcasm. (Other users seem to feel the same, see here and here.)

Even beyond its Twitter integration, Grok stands out for being the least politically correct, most blunt, and least sycophantic of all the AI models out there, both in terms of its style of writing and the substance of what it says. It does hallucinate and gets things wrong sometimes, especially on the topic of Elon, about whom it will often exaggerate things (what a coincidence!), but it generally seems like the least likely to actively lie or sugarcoat information, which is quite refreshing. That said, my AI usage is pretty tame, and these are not good enough reasons for me to switch over from Claude and ChatGPT.

So while I don’t really use Grok that much, apart from the occasional explanations I need on Twitter, it’s obvious to me that the model is very good. xAI definitely seems to have the sauce. I’m curious to see how they compete with the other companies over the coming years.

— — —

Gemini

Gemini is Google’s own AI model, created presumably by researchers at DeepMind, an AI lab that Google acquired back in 2014. You might recognize DeepMind from the excellent 2017 documentary, AlphaGo. (The entire film is available on YouTube. Highly recommended.)

In some ways, Google’s position is similar to Microsoft’s. Both are trillion dollar companies that sell a bunch of tools to both consumers and businesses, including entire B2B productivity suites. And so, just like Microsoft Copilot, Google has also decided to integrate Gemini into every single one of their products, including Google search, Gmail, Drive, Sheets, Docs, and so on. Gemini now comes with its own app as well.

Although my entire personal life runs on Google’s products, I’ve never once used any Gemini feature through them, even though Google seems intent on shoving it down my throat just to increase their KPIs.

Well, I do use it in search, I guess, because Google is still my go-to search engine, and it now gives you an AI-generated result for most of your search queries. I’ve sorted all the AI products listed in this section from the least to most used by me, and the only reason Gemini is this close to the end is because I technically (am forced to) use it very frequently simply by virtue of using Google search.

Now, Gemini’s summaries are well written and often helpful, obviously, but I find them incredibly overused, and wish there was a toggle to turn them off by default. That way I could opt in to seeing them only if I want to. Most of my searches are simple enough to not require any AI involvement at all, and so I find that wall of generated text that appears every time I search something to be both wasteful and aesthetically ugly.

The only use case where I do like it is in the realm of programming. I’ve often Googled stuff related to a certain API or language, expecting to be taken to the relevant documentation page to get what I need, but had Gemini give me the exact documentation spec I needed on the results page itself.

However, it’s gotten to the point that even Googling something like “what time sunset today” generates an AI summary, even though Google has always given me the time for such a query on the results page itself. C’mon, Gemini, just chill out a bit, man. But anything to make those usage metrics go up, I guess.

Among all the big tech companies, Google’s journey in the AI race has been the most interesting one by far.

In the few months after ChatGPT came out, when the entire industry was clamoring to get in on the AI hype train, Google was nowhere to be found. When they did release their first model, Bard, I remember it was mocked online for being inaccurate and hallucinatory almost to a point of parody.

Google then either scrapped or rebranded Bard, and pivoted to an entirely new multimodal model, Gemini. At first they continued to be dominated by OpenAI and Anthropic, but each Gemini version release has been significantly better than the last.

Add to this the fact that Google has billions of monthly active users, and thus has both the best dataset to train its models on and the best distribution network to launch them into.

Which all brings us to Gemini 3, the latest version, which came out just this week, which the aforementioned Nano Banana Pro also uses, and which is currently supposed to be the best model out there based on all benchmarks. You just know that Google has awakened and that things are about to get very interesting.

So, in just two years, Google went from having the worst model in tech to the best one. Its reputation in the AI space has taken a 180 degree turn in that time, to the extent that a lot of people have begun speculating that Google could emerge as the big winner in AI, which is something that was unthinkable even a year ago. Talk about a comeback, huh.

— — —

Claude

Anthropic’s Claude is one of the two AI tools I’ve used deliberately and regularly for at least the last year or more, and is the second-last entry on this list.

Claude was launched only a few months after ChatGPT, and was the second major AI product to really make some waves in tech, way before the space was crowded with this many options. With each update, it began developing a reputation for being far better than its competitor for technical tasks like programming, and so naturally I decided to check it out.

When I signed up for Claude, ChatGPT was the only tool that I had to compare it against, and it was refreshingly different in just about every way. It looked unmistakably unique, with its serif font and its light-brown-ish color palette. It wrote differently too: more formally than GPT, but also in a more opinionated fashion. It just gave the vibe of being a weightier tool to use, somehow, and not in a pretentious way.

The claims about Claude being better at technical tasks turned out to be very true. You could instantly feel the difference even from a couple of conversations, especially if you gave it code to work with. It was far sharper at debugging errors. The code it wrote was just better. It explained technical things with more flair. (And this was back when the models weren’t half as good as they are now, so even minor improvements felt like huge leaps in performance.)

I was quite used to ChatGPT though, and didn’t want to switch entirely, but Claude was too useful at certain tasks to be ignored. And so I started using both. ChatGPT became my default assistant to run any quick day-to-day queries by, and to ask anything personal, since it maintained memory, whereas Claude became my “wise old wizard” of a mentor that I sought out to go deep into a certain topic, or to discuss anything work or engineering related.

Even outside of programming, I remember uploading my lease document to it to ask it some questions about my renewal. I remember reading a book on economic history, having no clue about the subject, and pasting passages from the book into Claude and asking it to explain them, which is how I was able to actually understand the book and make it through to the end. I remember uploading a blood report to it so that I could have it explain basic stuff from the report to me and ask follow-up questions.

All that said: you’ll notice I’ve used past tense for everything I’ve said about Claude so far. That’s because its superiority is no longer inarguable; GPT-4 and GPT-5 have caught up significantly, and are as good at all the technical stuff I used to go to Claude for, not to mention the other models that are presumably equally competent. In fact, the last couple of times I hit a major technical snag, I started a conversation with both models using the exact same prompt, and it was actually the conversation with ChatGPT that eventually led me to a solution. So as of now, I don’t have a real reason to keep using Claude.

That said, I find the messaging and aesthetics of Anthropic as a company to be far superior to those of pretty much anyone else in the AI space. There’s just something polished about their whole vibe, their comms, and their visual look. Their “keep thinking” video is by far the best work of media to come out of this AI era, and is a testament to how refined and well-crafted everything they do is. I’m sure that’s a huge part of the reason I got drawn towards Claude in the first place, and why I can never seem to truly let go.

— — —

ChatGPT

OpenAI’s ChatGPT is the AI product that started it all. It launched in late 2022 and has since - I don’t think this is hyperbolic to say - changed the world.

It was my first AI tool as well, and I remember checking it out the very week it launched. What’s surprising is that all these years later it’s still my go-to model for most tasks; nothing has fully replaced it. At this point I have its app installed on my personal laptop, phone, work laptop, and tablet.

The months after ChatGPT first came out were quite something. I’ve never seen a product catch fire this quickly. All of social media was talking about it constantly because no one had ever seen anything like it. (Twitter was especially on fire, because it has a lot of SF/tech/AI adjacent folks who actually work in the industry and understand the technology better than most. Twitter in general has always been ground zero for any AI discourse, news, or breakthrough, and if you care about this field even slightly you should be on there.)

ChatGPT then reached an estimated 100 million monthly active users (!) in just two months (!!), which at the time made it the fastest product ever to do so.

It’s been exactly three years since its launch and I think it’s fair to say that ChatGPT is still the most significant AI product in the entire world, be it technically or culturally. I imagine that for a lot of non-technical folks, ChatGPT is AI, sort of like how Xerox is photocopying.

You can feel its cultural significance even if you just pay attention outside. It’s the one product you consistently see in the wild. Every time I’m at a café where people are working, I notice that at least a few laptops have ChatGPT open on them. It’s the only model you can safely assume anyone you talk to has heard of. It’s the only model I’ve overheard people on the street talk about, and I don’t even live in SF.

If I had to judge the popularity of AI products based only on my lived experiences and not any online data, I would have to assume that ChatGPT is the only thing out there. The others don’t even come close to its level of penetration.

OpenAI clearly had first-mover advantage when they launched ChatGPT, but that’s not even close to a complete explanation for why it’s still so dominant. The interesting thing about this is that first-mover advantage is historically not the norm in tech at all; in fact, many would call it a disadvantage. Google wasn’t the first search engine to hit the market, Facebook wasn’t the first social network, yadda yadda yadda. Most monopolies that we now know of were actually products that knocked out many incumbents. Which makes OpenAI’s reign even more impressive. Sam Altman might be the GOAT.

I will say that although ChatGPT has often not been the best AI model in every single aspect - Grok, Claude, and Gemini have, at points, been superior at certain specific things - it’s always been a strong enough overall product that those edge cases don’t matter much.

I’m not an AI expert and can’t speak to the qualities of its various models - they’ve always been generally near the best, both in my experience and in terms of benchmarks - but what I can say is that all the non-AI-related engineering OpenAI does is exceptional too. Every time they’ve launched a new interface to use ChatGPT through, whether the iPhone app, or the Windows desktop app, or voice mode, it’s been flawless. It just works.

This is one area where other products often fall behind. When I first installed the Claude desktop app, for instance, it often didn’t work, or would be slow, and so on. I hardly ever faced a tech problem like this with ChatGPT.

OpenAI has also always been the most innovative at adding new features that make a real difference. They rolled out personalization and custom instructions, the ability for users to upload files, web search, voice mode, and projects, and these are just the features I can recall off the top of my head. To be fair, OpenAI might not have been the first to ship each of these features, but they were the ones to launch a very solid version of each and make them mainstream, sort of like what Edison did with the lightbulb.

Having used it this long, I think the personalization feature might be ChatGPT’s moat. Because it remembers things about you across conversations, and because you can give it certain preset instructions that’ll apply to all conversations, you’re not just talking to an AI but an AI that knows you and what you want, and can thus tailor its responses to your preferences. This adds up significantly over time, because you don’t need to give it new context every time you start a new thread; it just knows what assumptions to make. And once you have enough of a history with it, you can ask it personal questions about yourself and your life - everyone’s favourite topic! - and get shockingly insightful answers.

I’m not going to share examples of personal conversations I’ve had with it here, but reach out if you want to know.

No wonder it’s being used as a therapist and for emotional support so widely.

If you think it’s terrifying how much data Google has about you, don’t even think about what OpenAI has. Please don’t sell my deepest insecurities to advertisers, Mr. Sam Alt Man.

I also need to emphasize how good ChatGPT’s voice conversation mode is. It’s fast enough, and the text-to-speech is natural enough, that you feel as if you’re talking to a real person. Man, it’s uncanny. The film Her is a reality now.

ChatGPT is even more significant to me as a Twitter person, because OpenAI is a constant topic of discussion there, often due to internal political drama. The stretch when Altman was fired as CEO by the OpenAI board, only to come back a few days later, was especially insane.

I won’t say ChatGPT is a perfect product though. It never has been. For starters, their model names are terrible and I can never keep track of what’s what. Some of their models have also been sycophantic and agreeable to a worrying degree. But none of that stuff really matters too much in the long run, at least to me. It’s been such a game changer for me and is so deeply integrated into my life that I probably will end up asking it what I should wear for my own funeral.

And that’s all I got

Having said so much, my only parting thought on the topic of AI for now is that I’m amazed to live in a time where wonderful technology like this can exist and am grateful for all those who have helped make it happen.
